I received some feedback from my previous posts on computer graphics asking for a basic explanation of the differences between the two main ways of representing images in digital computer files, which are:

- Bitmap “paintings”

- Vector “drawings”

Most people probably view images on their computers (or phones, tablets or any other digital device with a pictorial interface) without giving any thought to how the image is stored and displayed in the computer. That’s fine if you’re just a user of images, but for those of us who want to create or manipulate computer graphic images, it’s important to understand the internal format of the files.

Bitmap Images

If you’ve ever taken or downloaded a digital photo, you’re already familiar with bitmap images, even if you weren’t aware that that’s what digital photos are.

A bitmap represents an image by treating the image area as a rectangle, and dividing up the rectangle into a two-dimensional array of tiny pixels. For example, an image produced by a high-resolution phone camera may have dimensions of 4128 pixels horizontally and 3096 pixels vertically, requiring 4128×3096 = 12,780,288 pixels for the entire image. (Bitmap images usually involve large numbers of pixels, but computers are really good at handling large numbers of items!) Each pixel specifies a single color value for the image at that point. The resulting image is displayed simply by copying (“blitting”) the array of pixels to the screen, with each pixel showing its defined color.

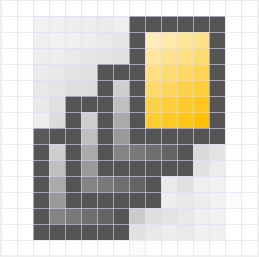

Some of the smallest bitmap images you’ll see are the icons used for programs and other items in computer user interfaces. The size of these bitmaps can be as small as 16×16 pixels, which provides very little detail, but is sufficient for images that will always be viewed in tiny sizes. Here’s one that I created for a user interface some time ago:

Enlarging this image enables you to see each individual pixel:

You can see the pixel boundaries here, and count them to confirm that (including the white pixels at the edges) the image is indeed 16×16 pixels.

Obviously, the enlarged image looks unacceptably crude, but, since the image would normally never be viewed at this level of magnification, it’s good enough for use as an icon. In most cases, such as digital photographs, there are so many pixels in the bitmap that your eye can’t distinguish them at normal viewing sizes, so you see the image as a continuous set of tones.

Bitmap images have a “resolution”, which limits the size to which you can magnify the image without visible degradation. Images with higher numbers of pixels have higher resolution.

Given that bitmap image files are usually large, it’s helpful to be able to be able to compress the pixel map in some way, and there are many well-known methods for doing this. The tradeoff is that, the more compression you apply, the worse the image tends to look. One of the best-known is JPEG (a standard created by the Joint Photographic Experts’ Group), which is intended to allow you to apply variable amounts of compression to digital photographs. However, it’s important to realize that bitmap image files are not necessarily compressed.

Programs that are designed to process bitmap images are referred to as “paint” programs. Well-known examples are: Adobe Photoshop and Corel PhotoPaint.

Vector Images

The alternative way of producing a computer image is to create a list of instructions describing how to draw the image, then store that list as the image file. When the file is opened, the computer interprets each instruction and redraws the complete image, usually as a bitmap for display purposes. This process is called rasterization.

This may seem to be an unnecessarily complex way to create a computer image. Wouldn’t it just be simpler to stick to bitmap images for everything? Well, it probably wouldn’t be a good idea to try to store a photo of your dog as a vector image, but it turns out that there are some cases where vector images are preferable to bitmap images. Part of the skill set of a digital artist is knowing which cases are best suited to vector images, and which to bitmaps.

There are many vector drawing standards, and many of those are proprietary (e.g., AI, CDR). One open vector drawing standard that’s becoming increasingly popular is SVG (Scalable Vector Graphics). You can view the contents of an SVG file by opening it with a text editor program (such as Notepad).

Here’s a very simple example of an SVG image file, consisting of a white cross on a red circle:

(Not all browsers can interpret SVG files, so I rendered the image above as a bitmap to ensure that you can see it!)

If you open the SVG file with a text editor, you can see the instructions that create the image shown above. In this case, the important instructions look like this:

<g id=”Layer_x0020_1″>

<circle class=”fil0″ cx=”2448″ cy=”6098″ r=”83″/>

<path class=”fil1″ d=”M2398 6053l5 -5c4,-4 13,-1 20,5l26 26 26 -26c7,-7 16,-9 20,-5l5 5c4,4 1,13 -5,20l-26 26 26 26c7,7 9,16 5,20l-5 5c-4,4 -13,1 -20,-5l-26 -26 -26 26c-7,7 -16,9 -20,5l-5 -5c-4,-4 -1,-13 5,-20l26 -26 -26 -26c-7,-7 -9,-16 -5,-20z”/>

</g>

As you’d expect, the instructions tell the computer to draw a “circle”, and then create the cross item by following the coordinates specified for the “path” item.

Of course, if you were to try to represent a photograph of your dog as a vector image, the resulting file would contain a huge number of instructions. That’s why bitmap images are usually preferable for digital photographs and other very complex scenes.

A major advantage of vector image formats is that the picture can be rendered at any size without degradation. Bitmap images have inherent resolutions, which vector images do not have.

Programs that are designed to process vector images are referred to as “drawing” programs. Well-known examples are: Adobe Illustrator and Corel Draw.

Converting Between Bitmap and Vector Images

It’s often necessary to convert a vector image into a bitmap image, and, less frequently, to convert a bitmap image into a vector image.

Conversion of vector images to bitmaps occurs all the time, every time you want to view the content of a vector image. When you open a vector image, the computer reads the instructions in the file, and draws the shapes into a temporary bitmap that it displays for you.

Converting bitmaps to vector images requires special software. The process is usually called “Tracing”. Years ago, you had to buy tracing software separately, but now most vector drawing software includes built-in tracing capabilities. As the name suggests, tracing software works by “drawing around” the edges of the bitmap, so that it creates shapes and lines representing the image. The result of the operation is that the software generates a set of mathematical curves that define the vector image.

Summary of the Pros and Cons

There are situations where bitmap images are preferable to vector images, and vice versa. Here’s a summary of the pros and cons of each type.

Bitmap

Advantages:

- Complex scenes can be depicted as easily as simple scenes.

- Significant compression is usually possible, at the expense of loss of quality.

- Rendering is computationally easy; requires minimal computing power.

Disadvantages:

- Size: Files tend to be large.

- Not scalable: attempting to magnify an image causes degradation.

Vector

Advantages:

- Compact: Files tend to be small.

- Scalable: images can be displayed at any resolution without degradation.

Disadvantages:

- Complex scenes are difficult to encode, which tends to create very large files.

- Rendering is computationally intensive; requires significant computing power.